
Private LLM legal assistant cuts manual document review time by 80%

AI and Machine Learning


Overview

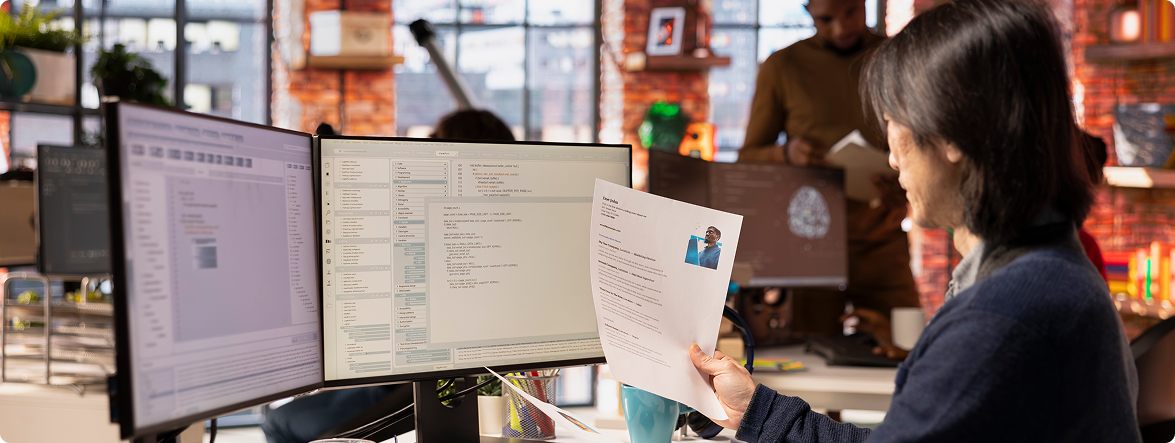

An enterprise legal team was drowning in manual document reviews. Wishtree partnered with them to build a private, domain-tuned LLM agent that uses document embeddings and generative Q&A to deliver instant answers.

Problem Statement

The client’s legal team was overwhelmed by manual document reviews. Each request took hours of searching, reading, and cross-referencing – time that could have been spent on higher-value legal work. Public AI tools were off-limits due to strict data privacy and compliance requirements.

Highlights

- 80% time savings per request

- <5% hallucination rate

- Full audit trails with AWS CloudTrail

- GDPR & SOC 2 compliant

- Secure, private deployment

Agentic AI refers to autonomous, goal-driven software agents that act with limited human input to achieve specific goals, such as optimizing pricing, forecasting demand, and detecting fraud in real time.

About the Client

The client is a global enterprise with thousands of legal documents. Legal staff spent hours manually searching and reviewing documents for every compliance request, which slowed business operations and created bottlenecks.

Challenges

- Legal staff spent 3–5 hours per request manually searching through thousands of documents.

- Inconsistent answers across team members led to compliance risks.

- Public AI tools could not be used due to data privacy concerns and regulatory restrictions.

- Growing document volumes made manual processes unsustainable.

- GDPR and SOC 2 compliance required strict controls on data access and processing.

Solution

- Built a private, domain-tuned LLM deployed entirely within the client’s secure environment.

- Created document embeddings that index all legal documents for instant semantic search and retrieval.

- Developed a generative Q&A interface that answers natural language questions based on retrieved document context.

- Implemented strict access controls and audit logging to meet GDPR and SOC 2 requirements.

- Tuned the LLM on the client’s specific document types and legal terminology to minimize hallucinations.

- Delivered a user-friendly chat interface for legal staff to ask questions and get answers instantly.
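The indexing and access-control steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the system described in this case study: `embed` is a toy hashed bag-of-words function standing in for a real embedding model (so matching here is lexical rather than truly semantic), and `EmbeddingIndex`, `Chunk`, the group-based `allowed_groups` access model, and the sample documents are all hypothetical.

```python
import hashlib
import math
import re
from dataclasses import dataclass, field

DIM = 256  # dimensionality of the toy embedding space (illustrative assumption)


def embed(text: str) -> list[float]:
    """Toy stand-in for a real embedding model (assumption): a hashed
    bag-of-words vector, L2-normalized so a dot product equals cosine similarity."""
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        bucket = int(hashlib.md5(token.encode()).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


@dataclass
class Chunk:
    doc_id: str
    section: str
    text: str
    allowed_groups: frozenset  # groups permitted to see this chunk (hypothetical ACL model)
    vector: list = field(default_factory=list)


class EmbeddingIndex:
    """Hypothetical in-memory index; a production system would use a vector store."""

    def __init__(self) -> None:
        self.chunks: list[Chunk] = []

    def add(self, doc_id: str, section: str, text: str, allowed_groups: set[str]) -> None:
        self.chunks.append(Chunk(doc_id, section, text, frozenset(allowed_groups), embed(text)))

    def search(self, query: str, user_groups: set[str], k: int = 3) -> list[tuple[float, Chunk]]:
        q = embed(query)
        # Enforce access control before ranking, so restricted text never reaches the LLM.
        visible = [c for c in self.chunks if c.allowed_groups & user_groups]
        scored = [(sum(a * b for a, b in zip(q, c.vector)), c) for c in visible]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return scored[:k]


# Usage with two made-up documents.
index = EmbeddingIndex()
index.add("NDA-2021-014", "§4 Term",
          "The confidentiality obligations survive termination for five years.", {"legal"})
index.add("DPA-2022-003", "§7 Transfers",
          "Personal data may not be transferred outside the EEA without safeguards.",
          {"legal", "privacy"})

top_score, top_chunk = index.search(
    "How long do confidentiality obligations survive termination?", {"legal"}, k=1)[0]
print(top_chunk.doc_id, top_chunk.section)
```

Filtering by group before ranking, rather than after generation, is one way to keep restricted passages out of the model's context entirely.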

How It Works

- When a legal staff member asks a question, the system first searches all indexed documents using semantic embeddings to find the most relevant passages.

- The retrieved context is fed to the private LLM, which generates a precise answer based only on those documents.

- Every answer includes citations showing exactly which documents and sections were used, enabling quick verification.

- The system achieves a <5% hallucination rate through domain tuning and strict context grounding.

- All queries and responses are logged with full audit trails for compliance review.
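The query flow above (retrieve, answer only from the retrieved context, cite, log) might look roughly like this. It is a hedged Python sketch, not Wishtree's implementation: the prompt wording, the `[n]` citation format, the `needs_review` flag, and the in-memory `audit_log` list are assumptions, and `generate` is a hypothetical callable standing in for the private LLM endpoint. Retrieval is taken as already done, so the function receives the passages directly.

```python
import hashlib
import json
import re
import time
from dataclasses import dataclass
from typing import Callable

NOT_FOUND = "Not found in the provided documents."  # assumed refusal string


@dataclass
class Passage:
    doc_id: str
    section: str
    text: str


def build_grounded_prompt(question: str, passages: list[Passage]) -> str:
    """Number each retrieved passage so the model can cite it as [1], [2], ..."""
    sources = "\n".join(
        f"[{i}] ({p.doc_id}, {p.section}) {p.text}"
        for i, p in enumerate(passages, start=1)
    )
    return (
        "Answer using ONLY the sources below and cite them as [n]. "
        f"If they do not contain the answer, reply exactly: {NOT_FOUND}\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}\nAnswer:"
    )


def extract_citations(answer: str, passages: list[Passage]) -> list[dict]:
    """Map [n] markers back to documents, ignoring markers that point at no passage."""
    refs = sorted({int(n) for n in re.findall(r"\[(\d+)\]", answer)})
    return [
        {"ref": n, "doc_id": passages[n - 1].doc_id, "section": passages[n - 1].section}
        for n in refs
        if 1 <= n <= len(passages)
    ]


def answer_question(user_id: str, question: str, passages: list[Passage],
                    generate: Callable[[str], str], audit_log: list[str]) -> dict:
    prompt = build_grounded_prompt(question, passages)
    answer = generate(prompt)  # call to the private LLM (hypothetical injected interface)
    citations = extract_citations(answer, passages)
    # Flag answers that neither cite a source nor admit the answer is missing.
    needs_review = not citations and answer.strip() != NOT_FOUND
    # Append one JSON record per query; a list stands in for durable audit storage.
    audit_log.append(json.dumps({
        "timestamp": time.time(),
        "user_id": user_id,
        "question": question,
        "answer": answer,
        "citations": citations,
        "needs_review": needs_review,
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
    }, ensure_ascii=False))
    return {"answer": answer, "citations": citations, "needs_review": needs_review}


# Usage with a stub in place of the real model endpoint.
def fake_llm(prompt: str) -> str:
    return "Confidentiality obligations survive termination for five years [1]."


log: list[str] = []
passages = [Passage("NDA-2021-014", "§4 Term",
                    "The confidentiality obligations survive termination for five years.")]
result = answer_question("user-123", "How long do confidentiality obligations last?",
                         passages, fake_llm, log)
print(result["citations"])
# [{'ref': 1, 'doc_id': 'NDA-2021-014', 'section': '§4 Term'}]
```

Hashing the full prompt lets an auditor confirm which context an answer was generated from without copying every retrieved passage into the log; in practice the records would go to durable, access-controlled storage rather than a Python list.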

Tech Stack

- Amazon Bedrock

- AWS KMS

- AWS CloudTrail

- Amazon SageMaker

- Amazon ECS

- Amazon RDS

- Amazon QuickSight

- Amazon Kinesis

- Hibernate

Core Features

Private, domain-tuned LLM

Generative Q&A interface

Full audit logging

Citation & verification

Strict access controls

Impact

- 80% time savings per request

- <5% hallucination rate

- Fully compliant with GDPR and SOC 2 standards

- Instant answers

- Citations with every response

- Strict access controls

- Audit logs for every query

Why Wishtree

Wishtree builds private, secure AI solutions for regulated industries, where data privacy and compliance are non-negotiable.

For this enterprise legal team, we:

- Delivered 80% time savings with a private, domain-tuned LLM

- Achieved <5% hallucination rate through careful tuning and grounding

- Ensured full GDPR and SOC 2 compliance from day one

- Built a solution legal teams can trust, backed by citations and audit trails